Abstract

Generative modeling, which learns a joint probability distribution from data and generates samples according to it, is an important task in machine learning and artificial intelligence. Inspired by the probabilistic interpretation of quantum physics, we propose a generative model using matrix product states, a tensor network originally proposed for describing (particularly one-dimensional) entangled quantum states. Our model enjoys efficient learning analogous to the density matrix renormalization group method, which allows dynamic adjustment of the tensor dimensions and offers an efficient direct sampling approach for generative tasks. We apply our method to generative modeling of several standard data sets, including the Bars and Stripes random binary patterns and the MNIST handwritten digits, to illustrate the abilities, features, and drawbacks of our model compared with popular generative models such as the Hopfield model, Boltzmann machines, and generative adversarial networks. Our work sheds light on many interesting directions for future exploration in the development of quantum-inspired algorithms for unsupervised machine learning, which could potentially be realized on quantum devices.

- Received 27 September 2017

- Revised 23 April 2018

DOI:https://doi.org/10.1103/PhysRevX.8.031012

Published by the American Physical Society under the terms of the Creative Commons Attribution 4.0 International license. Further distribution of this work must maintain attribution to the author(s) and the published article’s title, journal citation, and DOI.

Popular Summary

Given a set of statistical data, how could one construct a model that is able to describe the underlying probability distribution and to generate new samples from that distribution? Generative modeling, one of the central tasks in machine learning, provides a method. Quantum generative modeling, exploiting the inherently probabilistic nature of quantum states and their superior representation power, is currently being explored as one of the quantum machine learning approaches. Here, we develop a quantum generative model and examine its abilities on several standard data sets.

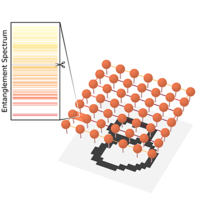

Our model encodes a probability distribution into the squared norm of a matrix (tensor) product state (MPS), a potent representation for entangled quantum states. The components of the tensors in the matrix product state are then the parameters that the training algorithm in the generative modeling process must determine from the data. This quantum-mechanics-inspired representation allows us to combine methods from both quantum physics and machine learning to gain advantages in both training and sampling. The training algorithm not only tunes the values of the model parameters but also adaptively adjusts their allocations in the tensors—a flexibility enabled by the MPS tensor network representation. Our model also has a direct sampling algorithm that generates independent samples more efficiently than the conventional statistical-physics-inspired approaches.
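The idea in the paragraph above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: it assumes real-valued tensors, binary variables, open boundary conditions, and randomly initialized (untrained) parameters, and the function names (`random_mps`, `amplitude`, `probability`, `sample`) are hypothetical. It shows how an MPS assigns a probability proportional to the squared amplitude of a configuration, and how independent samples can be drawn site by site from exact conditional probabilities without a Markov chain.

```python
import numpy as np


def random_mps(n_sites, bond_dim, rng):
    """Random real MPS; tensor k has shape (left bond, 2, right bond)."""
    dims = [1] + [bond_dim] * (n_sites - 1) + [1]
    return [rng.normal(size=(dims[k], 2, dims[k + 1])) for k in range(n_sites)]


def amplitude(mps, bits):
    """Unnormalized amplitude psi(v): product of the matrices selected by v."""
    vec = np.ones(1)
    for tensor, b in zip(mps, bits):
        vec = vec @ tensor[:, b, :]
    return vec[0]


def right_environments(mps):
    """envs[k] contracts sites k..N-1 of <psi|psi> over their physical indices."""
    envs = [None] * (len(mps) + 1)
    envs[-1] = np.ones((1, 1))
    for k in range(len(mps) - 1, -1, -1):
        envs[k] = np.einsum("asb,bc,dsc->ad", mps[k], envs[k + 1], mps[k])
    return envs


def probability(mps, bits):
    """P(v) = psi(v)^2 / Z, with Z the squared norm of the MPS."""
    z = right_environments(mps)[0][0, 0]
    return amplitude(mps, bits) ** 2 / z


def sample(mps, rng):
    """Draw one independent sample, one site at a time, left to right."""
    envs = right_environments(mps)
    vec = np.ones(1)
    bits = []
    for k, tensor in enumerate(mps):
        # Candidate left vectors for each value of the current variable.
        candidates = [vec @ tensor[:, s, :] for s in range(2)]
        # Marginal weight of each value, summing over all later sites.
        weights = np.array([c @ envs[k + 1] @ c for c in candidates])
        weights = np.clip(weights, 0.0, None)  # guard against rounding error
        s = int(rng.choice(2, p=weights / weights.sum()))
        bits.append(s)
        vec = candidates[s]
    return bits


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    mps = random_mps(n_sites=6, bond_dim=4, rng=rng)
    v = sample(mps, rng)
    print(v, probability(mps, v))
```

Each call to `sample` costs a single sweep over the sites, and successive samples are statistically independent. For long chains a practical implementation would keep the tensors in canonical form or rescale intermediate vectors to avoid numerical overflow, which this sketch omits.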

Probabilistic modeling using quantum states, bringing together ideas from machine learning and quantum physics, has the potential to be adopted in several areas such as quantum information, quantum computing, and many-body physics. Perhaps the most exciting possibility is the implementation of a quantum generative model in an actual quantum device rather than its simulation on a classical computer. Our approach is a step towards this goal.