Abstract

Much of human knowledge sits in large databases of unstructured text. Leveraging this knowledge requires algorithms that extract and record metadata on unstructured text documents. Assigning topics to documents will enable intelligent searching, statistical characterization, and meaningful classification. Latent Dirichlet allocation (LDA) is the state of the art in topic modeling. Here, we perform a systematic theoretical and numerical analysis that demonstrates that current optimization techniques for LDA often yield results that are not accurate in inferring the most suitable model parameters. Adapting approaches from community detection in networks, we propose a new algorithm that displays high reproducibility and high accuracy and also has high computational efficiency. We apply it to a large set of documents in the English Wikipedia and reveal its hierarchical structure.

2 More- Received 21 May 2014

DOI:https://doi.org/10.1103/PhysRevX.5.011007

This article is available under the terms of the Creative Commons Attribution 3.0 License. Further distribution of this work must maintain attribution to the author(s) and the published article’s title, journal citation, and DOI.

Published by the American Physical Society

Popular Summary

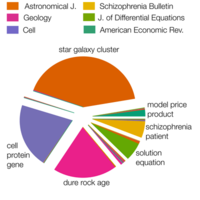

A significant fraction of data that are currently being generated and stored is in the form of unstructured text. Researchers use topic modeling algorithms to automatically classify text documents into topics in text recommendation systems, digital image analysis, spam filtering, and high-dimensional data mining with the goal of recording and extracting relevant data. With the goal of enabling intelligent data searches, we study synthetic and real data, whose topics are known, to evaluate the performance of state-of-the-art algorithms. We show that current optimization techniques often have trouble yielding accurate and reproducible results, particularly if the topics are heterogeneously distributed. By borrowing methods from graph clustering, we propose a novel optimization method with high accuracy and reproducibility and no computational overhead.

One state-of-the-art algorithm for classifying text-based data is latent Dirichlet allocation, a means of assigning topics to documents. We measure its performance using specific synthetic data with known topics. We find that the algorithm is not able to detect the ground-truth topics because of the roughness of the likelihood landscape. We propose a novel technique that builds a network of co-occurrent words and finds topics starting from clusters of words in that network. We show that our method is more accurate, both in synthetic cases and in a real-world case of documents from the journal Science.

We expect that our results will yield new ways of automatically classifying text documents, a process that is particularly relevant given the growth of electronic, searchable data.